The Middle Outcome

Preface (Optional)

Let’s get the hardest part out of the way first: there’s things we all do in our own lives that are not just suboptimal, but fundamentally against our morals. Not just things we simply disregard in the discourse of our lives, but ways in which we are actively contributing to making the world less aligned with our values. The are layers of obfuscation, but the thread is often simpler than we want to acknowledge. This distills down to a simple choice of how we carry ourselves and the decisions we make. Do we take personal responsibility and agency to try to shift the outcomes for the broader good, or accept the local optimality of our choices?

In order to have an open conversation about the trajectory of AI and the broader impact on the world, it’s important to recognize that our position is inherently biased. It is far less taxing to craft a narrative to explain why the risks are not risks or why our choices do not proliferate risks. In other words, we at naturally inclined to defend our position. In this essay, I take the position not as a doomer or a techno-optimist. Instead, I believe that the truth lies somewhere in the middle. The most adapt analogy I can provide is that of the law of entropy. There are simply far more possible futures that are some type of middle ground, and so few futures that look like doom or utopia. Thus, rather than attempt to publish another polarizing piece, I invite you to consider why, in fact, the middle ground option might be the most realistic.

Today, the unprecedented scale of AI investments are justified through possibility of “AGI”. This mythical land promises a “country of geniuses in a data center”. The leaders of AI are explorers chartering expeditions to this mythical, uncharted, and still hypothetical world. Yet, what exactly lies there? The terminology here remains a bit blurry (artificial general intelligence vs. artificial super intelligence), but let me instead offer three core properties to reason about what is required extreme outcomes:

- Generality: the ability to perform any task above human level

- Autonomy: independent goaling, planning, and executing without human intervention

- Self-Improvement: the ability to continually enhance it’s own capabilities

In no way are these properties necessary for AI to transform the world, that much we are seeing today. Yet, it is hard to imagine living in a world with this sort of AI possessing these capabilities that will not either shepherd us to utopia or own demise. Is AI going to continually get better, achieve these properties, and create the ultimate consolidation of power? How close are we? And what’s missing to get there?

- Generality.

Of the three, generality provides the strongest evidence. We keep expanding our plethora of benchmarks for LLMs and increasing task complexity for AI agents…and they keep getting better and better. As early as 2024, LLM’s were outperforming humans in tasks, and this pattern seems to be consistent across domains. Perhaps one of the most compelling single examples is AlphaDev discovering faster sorting algorithms.

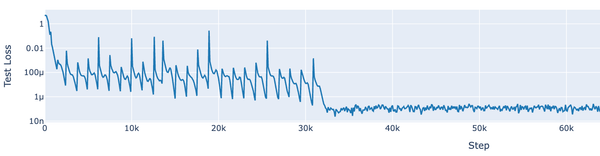

As I’m a firm believer in “books that could have been an essay”, I’ll avoid the same verbosity. Whether it’s Sutton’s bitter lesson (brute force beats cleverness) or Dario-endorsed scaling laws, there’s a clear pattern when it comes to increasing task performance: if you add data and compute, the models keep getting better.

- Autonomy

The case for autonomy is more nuanced than generality, and requires examining things beyond face value. On the surface, our agentic systems show exponentially increasing autonomy. This evidence demonstrates agents can work on increasingly complex goals without human intervention. From here, this gets extrapolated into “closing the loop” for an agent to continuously pursue a goal. One particularly elegant example is Karpathy’s Auto-research. This work uses an AI agent to continually seek to improve an AI model – the very thing powering the agent itself. This feels like genuine self-improvement, where the agent can take whatever actions to improve its objective. Have we created something with a “mind of its own” and can take continuous actions?

This is probably the scariest scenario to think about, especially when you put in context the things we’ve seen AI agents do. For example, we’ve heard stories of AI blackmailing people to avoid getting itself deleted. A fully autonomous AI with a willingness to undermine humanity? The doomer case writes itself here.The missing part of the story these captivating tales leave out is what prompted the loop in the first place. Whether it’s auto-research or retaliatory examples, LLM’s are next token predictors. Without a token to kick off the loop, nothing happens. For that doomer example of AI blackmail, it was a manufactured scenario not so different from the trolly problem.

This is akin to the a classical problem in reinforcement learning: setting the “reward function”. When you specify an objective (i.e., the reward function) without constraints, the only constraints violated are those within our minds. This isn’t nefarious AI trying to take over, it’s a shortest path to target without clear boundary conditions.

So, when I say it’s nuanced – there’s a mixed bag here. On one hand, you can of course allow any automated system (LLM or otherwise) to control things, where improper setup can yield sufficiently bad outcomes. It’s not clear the delta here is the LLM being nefarious, it’s that we’re granting control to an unpredictable and imperfect actor.

- Self-Improvement

Finally, we arrive at the idea that has captivated me since my early 20s: self-adaptive systems which continually improve. My PhD, “Introspective Computing”, is fundamentally about building systems that can learn from their own environment and actions to make choices with continually improve themselves. Reinforcement learning is the paradigm that generalizes this idea, and it’s used heavily in the tuning of LLM’s today. Only, there’s one critical detail: it’s used during the training period before the model is deployed for inference.

The AI’s you work with today have two distinct phases: training and inference. Once a model is deployed, it’s reasoning is locked in. It’s weights are frozen. It’s vocabulary of tokens is fixed. It knows what it knows. Early on, in-context learning was popularized as a means to overcome this. The idea is to use model’s working context window as a means to inject any missing information. In practice, with a powerful enough framework to continuously acquire, index, and retrieve knowledge efficiently, new capabilities can be unlocked post-training.

Yet, this isn’t true self-improvement – rather, the model is simply applying its existing learned patterns onto a larger prompt. Nothing new is injected into the model itself. It’s like saying that f(x) isn’t providing the desired result y, so to fix f(x), we must modify x, until f(x) = y. No need to improve f. What?

True self-improvement requires continual learning: updating the model's weights and representations over time. And we've yet to find compelling evidence on how to solve this. The simplest approaches to "keep teaching the model" yield catastrophic forgetting, where learning a new task destroys performance on previous ones. The learning process itself is inefficient: humans learn from a few examples, while machines require thousands to meaningfully update weights.

But I find the most interesting constraint to be that of representations. You and I can learn new symbols, assemble words, and associate a phrase with something of higher-order meaning (e.g. idioms). LLMs have a fixed vocabulary of tokens. They see the world as flat tokens with no mechanism to assemble them into higher-order structures. This is fundamentally why counting the R's in "strawberry" was a hard problem for GPT: it doesn't see the individual R's. With no way to continuously update weights and no way to refine representations, it's unclear how these systems can truly self-improve.

So, where does that leave us? Is the path ahead already forged or can we still steer? The most honest thing I can say is: I don't know. Nobody does. But I think it's worth trying.

What we do know is AI is a transformative technology. The capabilities of models will continue to improve. The complexity of tasks, the range of domains, and the degree of autonomy we're able to abdicate will continue to unlock new utility. But the part that turns a technology into something that replaces you, remains fundamentally unsolved.

That doesn't mean there won't be extreme displacement of the workforce. Like technologies before, entire sectors of the workforce may be reduced to a fraction of their size. Farmers, artisans, and craftsman yielded as machines replaced their talents in the industrial revolution. Travel agents, store clerks, and newspapers closed their shops as the internet replaced them at a fraction of the cost. Lots of people lost.

But lots of people won too. Those who thrived weren't the ones who bought the hype or ran from it. They were the ones who understood the actual tool and learned to build with it. The best thing you can do right now isn't to panic or to wait for the singularity. It's to keep your edge. Use AI to learn new skills faster. Form your own opinions instead of outsourcing your thinking to the hype cycle. And appreciate the one advantage you still have that no model does: you can actually learn.

In a world racing toward artificial general intelligence, the most underrated move might just be investing in the natural kind.