What Scaling Laws Meant for AI in 2020

Some research papers have titles so intuitive that it feels like we've read them without even opening them up. Even listening to Dario Amodei's podcast interview with Lex Fridman left me feeling as though I understood the gist of scaling: throw more compute at the problem, get better results. While that aligns with Sutton's "Bitter Lesson", the "second order" elements are far more interesting.

Consider two key "levers" you might target to increase model performance.

- Most obviously, you can feed the model more data (D, dataset size). A bigger dataset means more training time, so you'd expect more learning to happen. The crux here: if we keep training on the same data, will we overfit?

- Second, you could try scaling up the model itself (N, number of parameters). Since we're adding far more parameters, this is going to make training more expensive. Thus, if we are confined to a fixed compute budget, the implication is that a more powerful model gets to see less data. Will the model be able to generalize?
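To make the fixed-budget trade-off concrete, here is a minimal sketch. It assumes the common approximation that training costs about C ≈ 6·N·D FLOPs, which comes from Kaplan et al. rather than from anything stated above. The function name and the 1e21 FLOP budget are made up for illustration.

```python
def tokens_for_budget(compute_flops: float, n_params: float) -> float:
    """Tokens we can afford to train on, assuming C ≈ 6 * N * D."""
    return compute_flops / (6.0 * n_params)


# Hypothetical fixed budget; the value is only for illustration.
budget_flops = 1e21

for n_params in (1e8, 1e9, 1e10):
    d_tokens = tokens_for_budget(budget_flops, n_params)
    print(f"N = {n_params:.0e} params -> D = {d_tokens:.2e} tokens")
```

Under this approximation, every 10x increase in model size cuts the affordable tokens by 10x, which is exactly the tension in the second lever.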

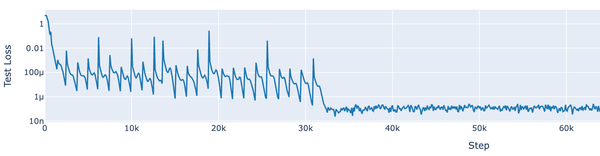

If you guessed "bet it all on large models", you'd be right – mostly. The scaling laws convincingly show that larger models perform better, even if fewer tokens are processed. However, since the model is larger, it does cost more to process those tokens. Nevertheless, as your compute budget increases, the optimal spending heavily favors N: the optimal model size increases by 5.4x for every 10x in compute, whereas the number of training steps grows by only 1.07x [Kaplan et al.]. In other words, on a log scale, 73% of the compute growth goes to model size! Comparatively, data scaling is sub-linear (D ∝ N^0.74).
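The 5.4x and 1.07x figures fall straight out of the power-law exponents, and a quick sketch shows the arithmetic. The model-size (0.73) and steps (0.03) exponents match the numbers quoted above. The batch-size exponent (0.24) is my addition from Kaplan et al. and is included only to show where the rest of the compute goes.

```python
# Compute-optimal exponents: each quantity scales as C ** exponent.
EXPONENTS = {
    "model_size": 0.73,  # N, quoted in the text above
    "batch_size": 0.24,  # B, from Kaplan et al.; not mentioned in the post
    "steps": 0.03,       # S, quoted in the text above
}


def growth_factors(compute_multiplier: float) -> dict:
    """How much each quantity grows when compute grows by compute_multiplier."""
    return {name: compute_multiplier ** exp for name, exp in EXPONENTS.items()}


growth = growth_factors(10.0)
for name, factor in growth.items():
    print(f"{name}: x{factor:.2f}")

# The exponents sum to 1, so the three factors multiply back to the 10x budget.
product = growth["model_size"] * growth["batch_size"] * growth["steps"]
print(f"product: x{product:.2f}")
```

Because the exponents sum to one, "73% of the growth" is a statement about the log of compute: 0.73 of every order of magnitude goes to parameters.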

This completely broke my intuition that bigger model = bigger data. The results actually suggest the reverse, that larger models are more "sample-efficient". Basically, big models learn patterns from the data sooner than small models! This raised a new compelling question for me: If these "big models" are learning faster, are they actually generalizing concepts?

"Language Models are Few-Shot Learners" provides a compelling answer. If we keep our compute-constrained hat on, we can reframe the question as: should we train smaller specialized models, or one giant model and hope it can do all the things? The answer is, again: big model (as long as it's a transformer).

The implications here are perhaps even more favorable, even if more intuitive. These big models can be made effective on specialized tasks by in-context "learning". It's worth noting that I find this terminology demeaning to "learning", since there are no model weight updates happening, only a growing input into the transformer. Semantic tangents aside, the point is that these big models can perform a specific task without any retraining: show them a few examples in the prompt, and they are likely able to generalize the idea.
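Here is a rough sketch of what "a few examples in the prompt" looks like in practice. The `Input:`/`Output:` layout and the function name are illustrative assumptions, not the exact format used in the paper. The point is only that the "training data" lives in the prompt string and no weights change.

```python
def build_few_shot_prompt(examples, query, instruction=""):
    """Assemble a few-shot prompt from (input, output) demonstration pairs.

    The layout is a hypothetical convention chosen for this sketch.
    """
    lines = []
    if instruction:
        lines.append(instruction)
        lines.append("")
    for example_input, example_output in examples:
        lines.append(f"Input: {example_input}")
        lines.append(f"Output: {example_output}")
        lines.append("")
    # The final entry is left open for the model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


demos = [("cheese", "fromage"), ("house", "maison")]
prompt = build_few_shot_prompt(demos, "dog", instruction="Translate English to French.")
print(prompt)
```

Swapping in a new task means swapping the demonstrations, which is why the tax-specialist scenario below does not need a fresh model every year.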

This is favorable, in my opinion, for a few reasons. First, it's really hard to generate specialist samples. Effective training requires thousands of samples to learn a specific concept, and in many cases that's just not practical. Second, specialist tasks evolve quickly. Are we going to train a fresh tax specialist model from scratch every year? Finally, it means that we can really take advantage of the aforementioned scaling laws to invest in big, powerful models without worrying about adaptability.

By the end of 2020 (when both of these works were published), I can only imagine the conviction frontier AI labs felt toward big models. Reviewing these works in 2025 left me wondering whether "running out of tokens on the internet" was even a real problem if these models could generalize so well. If we correct Kaplan's predicted data wall from 10^12 to 10^14 tokens, we should expect to handle models of at least 16T parameters. If we believe the rumors that GPT-5 is 1.4T parameters, we're roughly an OOM away from that theoretical ceiling.

But here's the real tension: just two years later, Chinchilla fundamentally challenged "big models over big data" and course-corrected the theory back to linear scaling. To add insult to injury, modern models aren't just scaling linearly (~20 tok/param), but are often fed far more data per parameter (>500 tok/param)! So whether you believe the original hype, the updated linear analysis, or the practical realities, the issue remains: where are we going to get more data? But that's for another day.